
Model probing of embedding estimators

This notebook shows how to investigate the data representation given by an embedding estimator (such as SimCLR, y-Aware Contrastive Learning or Barlow Twins) during training and at inference using the notion of “probing”. A standard machine learning model (e.g. a linear model or an SVM) is trained and evaluated on the data embedding for a given task, either while the estimator is being fitted (for training monitoring) or at inference. This lets the user understand which concepts are learned by the model.

Probing was first introduced by Guillaume Alain and Yoshua Bengio in 2017 [1] to understand the internal behavior of a deep neural network across its layers. The technique aims to answer questions such as: what is the intermediate representation of a neural network? What information is contained in a given layer?

It has since been adapted to benchmark self-supervised vision models (such as SimCLR, Barlow Twins, DINO, DINOv2 and DINOv3) on classical datasets (ImageNet, CIFAR, …) by applying linear probing and K-Nearest Neighbors probing to the model’s output representation.
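To make the idea concrete, here is a minimal sketch of K-Nearest Neighbors probing on frozen embeddings. It uses only the Python standard library and synthetic 2-d points standing in for encoder outputs; the helper names (`knn_predict`, `make_cluster`) and the toy data are illustrative assumptions and are not part of nidl.

```python
import math
import random
from collections import Counter


def knn_predict(train_z, train_y, query, k=3):
    """Predict the label of `query` by majority vote among its k nearest
    training embeddings (Euclidean distance)."""
    order = sorted(
        range(len(train_z)), key=lambda i: math.dist(train_z[i], query)
    )
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]


def make_cluster(rng, center, label, n):
    """Toy stand-in for encoder outputs: points scattered around a center."""
    return [([rng.gauss(c, 0.3) for c in center], label) for _ in range(n)]


rng = random.Random(0)
train = make_cluster(rng, (0.0, 0.0), 0, 50) + make_cluster(rng, (3.0, 3.0), 1, 50)
test = make_cluster(rng, (0.0, 0.0), 0, 20) + make_cluster(rng, (3.0, 3.0), 1, 20)

# The "encoder" is frozen: only the probe looks at the labels.
train_z, train_y = zip(*train)
correct = sum(knn_predict(train_z, train_y, z) == y for z, y in test)
knn_accuracy = correct / len(test)
print(f"k-NN probe accuracy: {knn_accuracy:.2f}")
```

A high probe accuracy indicates that the classes are well separated in the embedding space; a linear probe follows the same pattern with a linear classifier in place of the neighbor vote.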

Setup

This notebook requires some packages besides nidl. Let’s first start with importing our standard libraries below:

import os

import re

import matplotlib.pyplot as plt

import numpy as np

import torch.nn.functional as func

from sklearn.base import BaseEstimator as sk_BaseEstimator

from sklearn.base import clone

from sklearn.linear_model import LogisticRegression, Ridge

from sklearn.metrics import (

accuracy_score,

f1_score,

make_scorer,

r2_score,

)

from tensorboard.backend.event_processing import event_accumulator

from torch import nn

from torch.utils.data import DataLoader

from torchvision import transforms

from torchvision.datasets import MNIST

from torchvision.ops import MLP

from torchvision.utils import make_grid

from nidl.callbacks import ModelProbingCallback

from nidl.estimators.probes import ModelProbing

from nidl.datasets import OpenBHB

from nidl.estimators.ssl import SimCLR, YAwareContrastiveLearning

from nidl.metrics import pearson_r

from nidl.transforms import MultiViewsTransform

We define some global parameters that will be used throughout the notebook:

data_dir = "/tmp/mnist"

batch_size = 128

num_workers = 10

latent_size = 32

Unsupervised Contrastive Learning on MNIST

For illustration purposes on how to use the probing callback, we will focus on the handwritten digits dataset MNIST. It contains 60k training images and 10k test images of size 28x28 pixels. Each image contains a digit from 0 to 9. It is rather small-scale compared to modern datasets like ImageNet but sufficient to illustrate the probing technique. We will train a SimCLR model on these data and probe the learned representation using a logistic regression classifier on the digit classification task. It will show how the data embedding evolves during training to become more linearly separable for each digit class.

We start by loading the MNIST dataset with standard scaling transforms. These datasets are used for training and testing the probe.

scale_transforms = transforms.Compose(

[transforms.ToTensor(), transforms.Normalize((0.5,), (0.5,))]

)

train_xy_dataset = MNIST(data_dir, download=True, transform=scale_transforms)

test_xy_dataset = MNIST(

data_dir, download=True, train=False, transform=scale_transforms

)
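As a quick check of what this scaling does: `ToTensor()` converts 8-bit pixel values to floats in [0, 1], and `Normalize((0.5,), (0.5,))` then applies (x - mean) / std, so pixels end up in [-1, 1]. The plain-Python sketch below reproduces that arithmetic on the two extreme pixel values; the helper `scale_pixel` is illustrative only and does not call torchvision.

```python
def scale_pixel(value, mean=0.5, std=0.5):
    """Mimic ToTensor() followed by Normalize(mean, std) on one 8-bit pixel."""
    x = value / 255.0        # ToTensor: uint8 [0, 255] -> float [0, 1]
    return (x - mean) / std  # Normalize: (x - mean) / std


# Darkest and brightest possible pixels.
scaled = [scale_pixel(v) for v in (0, 255)]
print(scaled)
```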


Dataset and data augmentations for contrastive learning

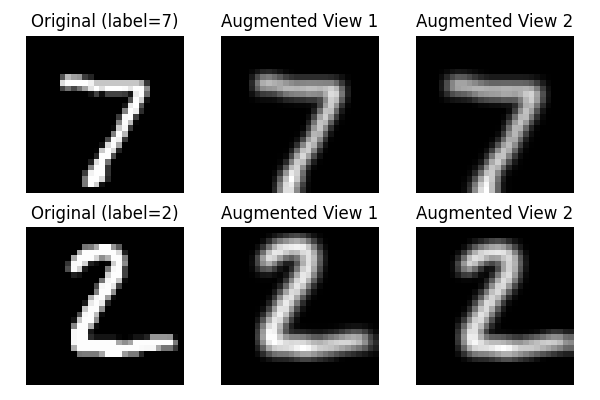

To perform contrastive learning, we need to define a set of data augmentations to create multiple views of the same image. Since we work with grayscale images, we will use random resized crop and Gaussian blur. We reduce the size of the Gaussian kernel to 3x3 since MNIST images are only 28x28 pixels.

contrast_transforms = transforms.Compose(

[

transforms.RandomResizedCrop(size=28, scale=(0.8, 1.0)),

transforms.GaussianBlur(kernel_size=3),

transforms.ToTensor(),

transforms.Normalize((0.5,), (0.5,)),

]

)

We create the datasets returning the augmented views for training the SSL models.

ssl_dataset = MNIST(

data_dir,

download=True,

transform=MultiViewsTransform(contrast_transforms, n_views=2),

)

test_ssl_dataset = MNIST(

data_dir,

download=True,

train=False,

transform=MultiViewsTransform(contrast_transforms, n_views=2),

)
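Conceptually, a multi-view wrapper applies the same stochastic augmentation pipeline several times to one input, so that each call yields independent views of it. The sketch below illustrates that idea with the standard library only. `MultiViewsSketch` and `random_shift` are hypothetical names, and returning the views as a list is our assumption (chosen to match how `augmented_views[0]` and `augmented_views[1]` are indexed later in this notebook); it is not a description of nidl's implementation.

```python
import random


class MultiViewsSketch:
    """Apply a stochastic transform `n_views` times to the same input."""

    def __init__(self, transform, n_views=2):
        self.transform = transform
        self.n_views = n_views

    def __call__(self, x):
        # Each call to the transform draws fresh randomness.
        return [self.transform(x) for _ in range(self.n_views)]


rng = random.Random(0)


def random_shift(signal):
    """Toy augmentation: circularly shift a 1-d signal by a random offset."""
    offset = rng.randrange(len(signal))
    return signal[offset:] + signal[:offset]


views = MultiViewsSketch(random_shift, n_views=2)([1, 2, 3, 4, 5])
print(views)
```

The two views contain the same content under different random perturbations, which is what the contrastive loss relies on to define positive pairs.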

And finally we create the data loaders for training and testing the models.

train_xy_loader = DataLoader(

train_xy_dataset,

batch_size=batch_size,

shuffle=True,

drop_last=False,

pin_memory=True,

num_workers=num_workers,

)

test_xy_loader = DataLoader(

test_xy_dataset,

batch_size=batch_size,

shuffle=False,

drop_last=False,

num_workers=num_workers,

)

train_ssl_loader = DataLoader(

ssl_dataset,

batch_size=batch_size,

shuffle=True,

pin_memory=True,

num_workers=num_workers,

)

test_ssl_loader = DataLoader(

test_ssl_dataset,

batch_size=batch_size,

shuffle=False,

pin_memory=True,

num_workers=num_workers,

)


Before starting training the SimCLR model, let’s visualize some examples of the dataset.

def show_images(images, title=None, nrow=8):

    grid = make_grid(images, nrow=nrow, normalize=True, pad_value=1)

    plt.figure(figsize=(10, 5))

    plt.imshow(grid.permute(1, 2, 0).cpu())

    if title:

        plt.title(title)

    plt.axis("off")

    plt.show()

# Original and augmented images

images, labels = next(iter(test_xy_loader))

augmented_views, _ = next(iter(test_ssl_loader))

view1, view2 = augmented_views[0], augmented_views[1]

fig, axes = plt.subplots(2, 3, figsize=(6, 4))

for i in range(2):

    axes[i, 0].imshow(images[i][0].cpu(), cmap="gray")

    axes[i, 0].set_title(f"Original (label={labels[i].item()})")

    axes[i, 0].axis("off")

    axes[i, 1].imshow(view1[i][0].cpu(), cmap="gray")

    axes[i, 1].set_title("Augmented View 1")

    axes[i, 1].axis("off")

    axes[i, 2].imshow(view2[i][0].cpu(), cmap="gray")

    axes[i, 2].set_title("Augmented View 2")

    axes[i, 2].axis("off")

plt.tight_layout()

plt.show()


SimCLR training with classification probing callback

We can now create the probing callback, which trains a logistic regression classifier on the learned representation during SimCLR training. The probing is performed every 3 epochs (every_n_train_epochs=3) on the training and test sets. The classification metrics (accuracy and f1-weighted) are logged to TensorBoard by default.

callback = ModelProbingCallback(

train_xy_loader,

test_xy_loader,

probe=LogisticRegression(max_iter=200),

scoring=["accuracy", "f1_weighted"],

every_n_train_epochs=3,

)

Since MNIST images are small, we can use a simple LeNet-like architecture with few parameters as the encoder for SimCLR. The output dimension of the encoder is set to 32, which is roughly 25 times smaller than the input (28x28 = 784 pixels) but larger than the number of classes (10).

class LeNetEncoder(nn.Module):

    def __init__(self, latent_size=32):

        super().__init__()

        self.latent_size = latent_size

        self.conv1 = nn.Conv2d(1, 6, kernel_size=5, stride=1, padding=2)

        self.pool1 = nn.AvgPool2d(2, 2)

        self.conv2 = nn.Conv2d(6, 16, kernel_size=5)

        self.pool2 = nn.AvgPool2d(2, 2)

        self.fc1 = nn.Linear(16 * 5 * 5, 120)

        self.fc2 = nn.Linear(120, 84)

        self.fc3 = nn.Linear(84, latent_size)

    def forward(self, x):

        x = func.relu(self.conv1(x))

        x = self.pool1(x)

        x = func.relu(self.conv2(x))

        x = self.pool2(x)

        x = x.view(x.size(0), -1)

        x = func.relu(self.fc1(x))

        x = func.relu(self.fc2(x))

        return self.fc3(x)

encoder = LeNetEncoder(latent_size)
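The `16 * 5 * 5` input size of the first fully connected layer follows from the standard output-size formula for convolutions and pooling, applied to a 28x28 input. The short sketch below walks through that arithmetic in plain Python; `conv_out` is an illustrative helper, not a PyTorch or nidl function.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


size = 28                                   # MNIST images are 28x28
size = conv_out(size, kernel=5, padding=2)  # conv1 keeps the spatial size
size = conv_out(size, kernel=2, stride=2)   # pool1 halves it
size = conv_out(size, kernel=5)             # conv2 without padding
size = conv_out(size, kernel=2, stride=2)   # pool2 halves it again
flat_features = 16 * size * size            # 16 channels after conv2
print(size, flat_features)
```

If the input resolution or any kernel size changes, the first argument of `fc1` has to be updated accordingly.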

We can now create the SimCLR model with the encoder and the probing callback. We limit the training to 10 epochs for the sake of time and because it is enough for checking the evolution of the embedding geometry across training.

model = SimCLR(

encoder=encoder,

limit_train_batches=100,

proj_input_dim=latent_size,

proj_hidden_dim=64,

proj_output_dim=32,

max_epochs=10,

temperature=0.1,

learning_rate=3e-4,

weight_decay=5e-5,

enable_checkpointing=False,

callbacks=callback, # <-- key part for probing

)

model.fit(train_ssl_loader, test_ssl_loader)


┏━━━┳━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━┳━━━━━━━┳━━━━━━━┓

┃ ┃ Name ┃ Type ┃ Params ┃ Mode ┃ FLOPs ┃

┡━━━╇━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━╇━━━━━━━╇━━━━━━━┩

│ 0 │ encoder │ LeNetEncoder │ 63.6 K │ train │ 0 │

│ 1 │ projection_head │ SimCLRProjectionHead │ 4.2 K │ train │ 0 │

│ 2 │ loss │ InfoNCE │ 0 │ train │ 0 │

└───┴─────────────────┴──────────────────────┴────────┴───────┴───────┘

Trainable params: 67.8 K

Non-trainable params: 0

Total params: 67.8 K

Total estimated model params size (MB): 0

Modules in train mode: 14

Modules in eval mode: 0

Total FLOPs: 0


Epoch 9/9: loss/train: 0.632, loss/val: 1.087, test_accuracy: 0.885, test_f1_weighted: 0.885

SimCLR(

(encoder): LeNetEncoder(

(conv1): Conv2d(1, 6, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(pool1): AvgPool2d(kernel_size=2, stride=2, padding=0)

(conv2): Conv2d(6, 16, kernel_size=(5, 5), stride=(1, 1))

(pool2): AvgPool2d(kernel_size=2, stride=2, padding=0)

(fc1): Linear(in_features=400, out_features=120, bias=True)

(fc2): Linear(in_features=120, out_features=84, bias=True)

(fc3): Linear(in_features=84, out_features=32, bias=True)

)

(projection_head): SimCLRProjectionHead(

(layers): Sequential(

(0): Linear(in_features=32, out_features=64, bias=True)

(1): ReLU()

(2): Linear(in_features=64, out_features=32, bias=True)

)

)

(loss): InfoNCE(temperature=0.1)

)
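The model summary lists an InfoNCE loss with a temperature of 0.1. As a rough illustration of what such a loss computes, the sketch below implements one common, one-directional formulation in plain Python: embeddings are L2-normalized, cosine similarities are divided by the temperature, and each anchor must pick out its own positive among all candidates. This is an assumption-laden sketch for intuition (the function names are ours), not nidl's implementation, which may differ, for example by symmetrizing the loss or by using additional negatives.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit Euclidean norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def info_nce(z1, z2, temperature=0.1):
    """One-directional InfoNCE: for each z1[i], z2[i] is the positive and
    every other z2[j] acts as a negative."""
    z1 = [l2_normalize(v) for v in z1]
    z2 = [l2_normalize(v) for v in z2]
    losses = []
    for i, anchor in enumerate(z1):
        # Cosine similarities scaled by the temperature.
        logits = [
            sum(a * b for a, b in zip(anchor, other)) / temperature
            for other in z2
        ]
        # Numerically stable log-sum-exp over all candidates.
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(s - top) for s in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)


anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned = info_nce(anchors, [[0.9, 0.1], [0.1, 0.9]])
swapped = info_nce(anchors, [[0.1, 0.9], [0.9, 0.1]])
print(f"aligned pairs: {aligned:.4f}, mismatched pairs: {swapped:.4f}")
```

The loss is small when each view is closest to its own pair and large when pairs are mismatched; a lower temperature sharpens this contrast.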

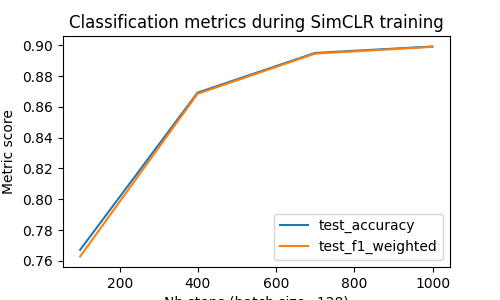

Visualization of the classification metrics during training

After training, we can visualize the classification metrics logged by the linear probe using TensorBoard. The logged metrics are stored in the lightning_logs folder by default. They contain the accuracy and f1-weighted scores.

def get_last_log_version(logs_dir="lightning_logs"):

    versions = []

    for d in os.listdir(logs_dir):

        match = re.match(r"version_(\d+)", d)

        if match:

            versions.append(int(match.group(1)))

    return max(versions) if versions else None

log_dir = f"lightning_logs/version_{get_last_log_version()}/"

ea = event_accumulator.EventAccumulator(log_dir)

ea.Reload()

metrics = [

"test_accuracy",

"test_f1_weighted",

]

scalars = {m: ea.Scalars(m) for m in metrics}

Once all the metrics are loaded, we plot them against the number of training steps:

plt.figure(figsize=(5, 3))

for m, events in scalars.items():

    steps = [e.step for e in events]

    values = [e.value for e in events]

    plt.plot(steps, values, label=m)

plt.xlabel(f"Nb steps (batch size={batch_size})")

plt.ylabel("Metric score")

plt.title("Classification metrics during SimCLR training")

plt.legend()

plt.tight_layout()

plt.show()

Observations: the classification metrics increase steadily during training, showing that the learned representation becomes more and more linearly separable for the digit classes. The test accuracy reaches about 88% after 10 epochs, which is quite good for such a simple model trained without supervision for a small number of epochs.

Classification metrics at inference

In addition to monitoring the classification metrics during training, we can also evaluate the linear probe at inference after training the SimCLR model.

probing = ModelProbing(

embedding_estimator=model, # <-- pass the trained SimCLR model as embedding estimator

probe=LogisticRegression(max_iter=200),

scoring=["accuracy", "f1_weighted"],

)

probing.fit(train_xy_loader)


ModelProbing(

(embedding_estimator): SimCLR(

(encoder): LeNetEncoder(

(conv1): Conv2d(1, 6, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))

(pool1): AvgPool2d(kernel_size=2, stride=2, padding=0)

(conv2): Conv2d(6, 16, kernel_size=(5, 5), stride=(1, 1))

(pool2): AvgPool2d(kernel_size=2, stride=2, padding=0)

(fc1): Linear(in_features=400, out_features=120, bias=True)

(fc2): Linear(in_features=120, out_features=84, bias=True)

(fc3): Linear(in_features=84, out_features=32, bias=True)

)

(projection_head): SimCLRProjectionHead(

(layers): Sequential(

(0): Linear(in_features=32, out_features=64, bias=True)

(1): ReLU()

(2): Linear(in_features=64, out_features=32, bias=True)

)

)

(loss): InfoNCE(temperature=0.1)

)

)

We can now evaluate the probe on the test set and print the classification metrics:

scores = probing.score(test_xy_loader)

print("Classification metrics at inference:")

for metric, score in scores.items():

    print(f"{metric}: {score:.4f}")


Classification metrics at inference:

accuracy: 0.8849

f1_weighted: 0.8845

Probing of y-Aware representation on age and sex prediction

So far we have monitored a single classification task during training. We can also monitor a mix of classification and regression tasks at the same time while an embedding model is being trained, which is useful when several target variables should be decoded from the same representation. We will show how to do this with nidl using ModelProbingCallback on the OpenBHB dataset, monitoring age and sex decoding from brain imaging data. We refer to the example on OpenBHB for more details on this neuroimaging dataset.

We define the relevant global parameters for this example:

data_dir = "/tmp/openBHB"

batch_size = 128

num_workers = 10

latent_size = 32

OpenBHB dataset and data augmentations

We consider the gray matter and CSF volumes of regions of interest from the Neuromorphometrics atlas across subjects in OpenBHB (“vbm_roi” modality). These data are tabular (not images), but they are still well suited to contrastive learning and they are lightweight compared to the raw images (one 284-d vector per subject). We start by loading these data for training and testing the probing callback. The target variables are age (regression) and sex (classification).

def target_transforms(labels):
    return np.array([labels["age"], labels["sex"] == "female"])


train_xy_dataset = OpenBHB(
    data_dir,
    modality="vbm_roi",
    target=["age", "sex"],
    transforms=lambda x: x.flatten(),
    target_transforms=target_transforms,
    streaming=False,
)

test_xy_dataset = OpenBHB(
    data_dir,
    modality="vbm_roi",
    split="val",
    target=["age", "sex"],
    transforms=lambda x: x.flatten(),
    target_transforms=target_transforms,
    streaming=False,
)

Fetching ... files: 0it [00:00, ?it/s]

Fetching ... files: 1it [00:00, 7796.10it/s]

Fetching ... files: 0it [00:00, ?it/s]

Fetching ... files: 1it [00:00, 15087.42it/s]
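To make the target layout concrete, the sketch below applies the same encoding to two hypothetical label dictionaries (the values are made up for illustration and are not taken from OpenBHB). Because NumPy promotes the boolean to the float dtype of the age, each sample's target becomes a length-2 float vector: column 0 holds the age and column 1 is 1.0 for female and 0.0 otherwise.

```python
import numpy as np


def target_transforms(labels):
    # Same encoding as above: [age, 1.0 if female else 0.0].
    return np.array([labels["age"], labels["sex"] == "female"])


# Hypothetical label dictionaries, for illustration only.
example_labels = [
    {"age": 23.5, "sex": "female"},
    {"age": 41.0, "sex": "male"},
]
targets = np.stack([target_transforms(lab) for lab in example_labels])
print(targets.shape)  # (2, 2)
print(targets.dtype)  # float64
```

This column order (age first, sex second) is what the per-task probes and scorers rely on later.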

To perform contrastive learning, we use random masking and Gaussian noise as data augmentations, both of which are well suited to tabular data. We will train a y-Aware Contrastive Learning model on these data, using age as the auxiliary variable.

mask_prob = 0.8
noise_std = 0.5

contrast_transforms = transforms.Compose(
    [
        lambda x: x.flatten(),
        lambda x: (np.random.rand(*x.shape) > mask_prob).astype(np.float32)
        * x,  # random masking
        lambda x: x
        + (
            (np.random.rand() > 0.5) * np.random.randn(*x.shape) * noise_std
        ).astype(np.float32),  # random Gaussian noise
    ]
)

ssl_dataset = OpenBHB(
    data_dir,
    modality="vbm_roi",
    target="age",
    transforms=MultiViewsTransform(contrast_transforms, n_views=2),
)

test_ssl_dataset = OpenBHB(
    data_dir,
    modality="vbm_roi",
    target="age",
    split="val",
    transforms=MultiViewsTransform(contrast_transforms, n_views=2),
)
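As a quick sanity check of these augmentations, the following sketch applies the same two steps to a toy 284-d vector. It uses a seeded `np.random.default_rng` generator, which is an assumption made here for reproducibility (the pipeline above relies on the global NumPy random state), and the helper names `random_mask` and `random_noise` are hypothetical. Note that with `mask_prob = 0.8`, the comparison `rand > mask_prob` keeps roughly 20% of the features and zeroes the rest, while the noise is added to about half of the samples.

```python
import numpy as np

mask_prob = 0.8
noise_std = 0.5
rng = np.random.default_rng(0)  # seeded generator, for reproducibility only


def random_mask(x):
    # Keep each feature with probability 1 - mask_prob, zero it otherwise.
    keep = (rng.random(x.shape) > mask_prob).astype(np.float32)
    return keep * x


def random_noise(x):
    # With probability 0.5, add Gaussian noise of standard deviation noise_std.
    add_noise = rng.random() > 0.5
    noise = add_noise * rng.standard_normal(x.shape) * noise_std
    return x + noise.astype(np.float32)


x = np.ones(284, dtype=np.float32)  # toy input standing in for one subject
masked = random_mask(x)
view = random_noise(masked)
kept_fraction = float((masked != 0).mean())
print(f"fraction of features kept: {kept_fraction:.2f}")
```

Masking this aggressively makes the two views of a subject differ substantially, so the encoder cannot rely on any single region to match them.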

As before, we create the data loaders for training and testing the models.

train_xy_loader = DataLoader(
    train_xy_dataset,
    batch_size=batch_size,
    shuffle=True,
    drop_last=False,
    pin_memory=True,
    num_workers=num_workers,
)

test_xy_loader = DataLoader(
    test_xy_dataset,
    batch_size=batch_size,
    shuffle=False,
    drop_last=False,
    num_workers=num_workers,
)

train_ssl_loader = DataLoader(
    ssl_dataset,
    batch_size=batch_size,
    shuffle=True,
    pin_memory=True,
    num_workers=num_workers,
)

test_ssl_loader = DataLoader(
    test_ssl_dataset,
    batch_size=batch_size,
    shuffle=False,
    pin_memory=True,
    num_workers=num_workers,
)

y-Aware CL training with multitask probing callback

Next, we create the multitask probing callback that will train a ridge regression on age and a logistic regression classifier on sex. The probing is performed every 3 epochs (every_n_train_epochs=3): the probes are fitted on the embeddings of the training set and evaluated on the test set. The metrics are logged to TensorBoard by default.

To do so, we need to create a meta-estimator (compatible with scikit-learn) that wraps the two estimators (ridge and logistic regression) and handles the mixed regression/classification tasks. We provide such a meta-estimator called MultiTaskEstimator below.

class MultiTaskEstimator(sk_BaseEstimator):
    """
    A meta-estimator that wraps a list of sklearn estimators
    for multi-task problems (mixed regression/classification).
    """

    def __init__(self, estimators):
        self.estimators = estimators

    def fit(self, X, y):
        """Fit each estimator on its corresponding column in y."""
        y = np.asarray(y)
        if y.ndim == 1:
            y = y.reshape(-1, 1)
        self.estimators_ = []
        for i, est in enumerate(self.estimators):
            fitted = clone(est).fit(X, y[:, i])
            self.estimators_.append(fitted)
        return self

    def predict(self, X):
        """Predict for each task."""
        preds = [est.predict(X).reshape(-1, 1) for est in self.estimators_]
        return np.hstack(preds)

    def score(self, X, y):
        """Average score across all tasks."""
        y = np.asarray(y)
        scores = []
        for i, est in enumerate(self.estimators_):
            scores.append(est.score(X, y[:, i]))
        return np.mean(scores)

    def __len__(self):
        return len(self.estimators)
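To illustrate the per-column logic on its own, the sketch below mirrors what `fit` and `predict` do, without the class wrapper. The embeddings and targets are synthetic values invented for this illustration (they are not OpenBHB features), and the relationship between features and targets is an arbitrary choice.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import LogisticRegression, Ridge

rng = np.random.default_rng(0)
n_samples, latent_size = 200, 32

# Synthetic "embeddings" and targets, invented for this illustration.
X = rng.normal(size=(n_samples, latent_size))
age = 40.0 + 10.0 * X[:, 0] + rng.normal(scale=1.0, size=n_samples)
sex = (X[:, 1] > 0).astype(float)
y = np.column_stack([age, sex])  # column 0: regression, column 1: classification

# One estimator per target column, as in MultiTaskEstimator.fit.
estimators = [Ridge(), LogisticRegression(max_iter=200)]
fitted = [clone(est).fit(X, y[:, i]) for i, est in enumerate(estimators)]

# Stack per-task predictions column-wise, as in MultiTaskEstimator.predict.
preds = np.hstack([est.predict(X).reshape(-1, 1) for est in fitted])
print(preds.shape)  # (200, 2)
```

Cloning each estimator before fitting leaves the objects passed to the constructor untouched, which is the usual scikit-learn convention for meta-estimators.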

Then, we define a scorer specific to each task:

def make_task_scorer(metric_fn, task_index, **kwargs):
    """Returns a scorer evaluating on y or y[:, task_index]."""

    def scorer(y_true, y_pred):
        if task_index is None:
            return metric_fn(y_true, y_pred)
        else:
            return metric_fn(y_true[:, task_index], y_pred[:, task_index])

    return make_scorer(scorer, **kwargs)
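The column selection performed by the inner `scorer` function can be checked in isolation. The sketch below rebuilds only that inner function, without the `make_scorer` wrapper, and uses two small hand-written metrics as stand-ins for scikit-learn's. The name `make_task_metric` and all arrays are hypothetical, made-up values.

```python
import numpy as np


def make_task_metric(metric_fn, task_index):
    """Return a function that evaluates metric_fn on one target column."""

    def metric(y_true, y_pred):
        if task_index is None:
            return metric_fn(y_true, y_pred)
        return metric_fn(y_true[:, task_index], y_pred[:, task_index])

    return metric


def mean_absolute_error(y_true, y_pred):
    return float(np.mean(np.abs(y_true - y_pred)))


def accuracy(y_true, y_pred):
    return float(np.mean(y_true == y_pred))


# Made-up targets and predictions: column 0 is age, column 1 is sex.
y_true = np.array([[20.0, 1.0], [35.0, 0.0], [50.0, 1.0], [65.0, 0.0]])
y_pred = np.array([[22.0, 1.0], [33.0, 0.0], [51.0, 0.0], [64.0, 0.0]])

age_mae = make_task_metric(mean_absolute_error, task_index=0)(y_true, y_pred)
sex_acc = make_task_metric(accuracy, task_index=1)(y_true, y_pred)
print(age_mae, sex_acc)  # 1.5 0.75
```

Each metric only ever sees the column of its own task, so a regression metric is never applied to the binary sex labels and vice versa.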

Finally, we create the multitask probing callback with the relevant estimators and scorers for age and sex.

callback = ModelProbingCallback(
    train_xy_loader,
    test_xy_loader,
    probe=MultiTaskEstimator([Ridge(), LogisticRegression(max_iter=200)]),
    scoring={
        "age/r2": make_task_scorer(r2_score, task_index=0),
        "age/pearsonr": make_task_scorer(pearson_r, task_index=0),
        "sex/accuracy": make_task_scorer(accuracy_score, task_index=1),
        "sex/f1": make_task_scorer(f1_score, task_index=1),
    },
    every_n_train_epochs=3,
)
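The `pearson_r` metric used above is not defined in this section; it is presumably a small helper defined elsewhere in the notebook. A minimal sketch of such a helper, assuming it simply returns the Pearson correlation coefficient between 1-D targets and predictions, could look like this (the ages are made-up values):

```python
import numpy as np


def pearson_r(y_true, y_pred):
    """Pearson correlation between two 1-D arrays (hypothetical helper)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.corrcoef(y_true, y_pred)[0, 1])


ages = np.array([20.0, 35.0, 50.0, 65.0])
print(pearson_r(ages, 2.0 * ages + 1.0))  # perfectly correlated predictions
print(pearson_r(ages, -ages))  # perfectly anti-correlated predictions
```

Unlike R2, the Pearson correlation is insensitive to a constant offset or rescaling of the predictions, which is why the two metrics are worth logging side by side.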

Since we work with tabular data, we can use a simple MLP as encoder for y-Aware Contrastive Learning. The input dimension is 284 and we compress the data to a 32-d latent space.

encoder = MLP(in_channels=284, hidden_channels=[64, latent_size])

We can now create the y-Aware Contrastive Learning model with the MLP encoder and the multitask probing callback. We limit the training to 10 epochs for the sake of time, and we use a bandwidth for the Gaussian kernel of the y-Aware model that is small compared to the variability of age in OpenBHB (sigma = 4, passed to the model as bandwidth=sigma**2).

sigma = 4

model = YAwareContrastiveLearning(
    encoder=encoder,
    proj_input_dim=latent_size,
    proj_hidden_dim=2 * latent_size,
    proj_output_dim=latent_size,
    bandwidth=sigma**2,
    max_epochs=10,
    temperature=0.1,
    learning_rate=1e-3,
    enable_checkpointing=False,
    callbacks=callback,  # <-- add callback to monitor the training
)

model.fit(train_ssl_loader, test_ssl_loader)

/opt/hostedtoolcache/Python/3.12.13/x64/lib/python3.12/site-packages/torch/utils/data/dataloader.py:1118: UserWarning: 'pin_memory' argument is set as true but no accelerator is found, then device pinned memory won't be used.

super().__init__(loader)

/opt/hostedtoolcache/Python/3.12.13/x64/lib/python3.12/site-packages/pytorch_lightning/loops/fit_loop.py:317: The number of training batches (26) is smaller than the logging interval Trainer(log_every_n_steps=50). Set a lower value for log_every_n_steps if you want to see logs for the training epoch.

┏━━━┳━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━┳━━━━━━━┳━━━━━━━┓

┃ ┃ Name ┃ Type ┃ Params ┃ Mode ┃ FLOPs ┃

┡━━━╇━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━╇━━━━━━━╇━━━━━━━┩

│ 0 │ encoder │ MLP │ 20.3 K │ train │ 0 │

│ 1 │ projection_head │ YAwareProjectionHead │ 4.2 K │ train │ 0 │

│ 2 │ loss │ YAwareInfoNCE │ 0 │ train │ 0 │

└───┴─────────────────┴──────────────────────┴────────┴───────┴───────┘

Trainable params: 24.5 K

Non-trainable params: 0

Total params: 24.5 K

Total estimated model params size (MB): 0

Modules in train mode: 13

Modules in eval mode: 0

Total FLOPs: 0

Extracting features: 0it [00:00, ?it/s]

Extracting features: 1it [00:04, 4.48s/it]

Extracting features: 2it [00:06, 3.30s/it]

Extracting features: 7it [00:07, 1.53it/s]

Extracting features: 12it [00:09, 1.64it/s]

Extracting features: 15it [00:10, 2.21it/s]

Extracting features: 22it [00:10, 3.74it/s]

Extracting features: 0it [00:00, ?it/s]

Extracting features: 1it [00:02, 2.40s/it]

Extracting features: 2it [00:02, 1.23s/it]

/opt/hostedtoolcache/Python/3.12.13/x64/lib/python3.12/site-packages/sklearn/linear_model/_logistic.py:470: ConvergenceWarning: lbfgs failed to converge after 200 iteration(s) (status=1):

STOP: TOTAL NO. OF ITERATIONS REACHED LIMIT

Increase the number of iterations to improve the convergence (max_iter=200).

You might also want to scale the data as shown in:

https://scikit-learn.org/stable/modules/preprocessing.html

Please also refer to the documentation for alternative solver options:

https://scikit-learn.org/stable/modules/linear_model.html#logistic-regression

n_iter_i = _check_optimize_result(

Epoch 9/9 ━━━━━━━━━━━━━━━━━ 26/26 0:00:10 • 0:00:00 4.40it/s v_num: 3.000

loss/train: 7.944

loss/val: 11.054

test_age/r2: 0.621

test_age/pearsonr: 0.793

test_sex/accuracy: 0.761

test_sex/f1: 0.743

YAwareContrastiveLearning(
  (encoder): MLP(
    (0): Linear(in_features=284, out_features=64, bias=True)
    (1): ReLU()
    (2): Dropout(p=0.0, inplace=False)
    (3): Linear(in_features=64, out_features=32, bias=True)
    (4): Dropout(p=0.0, inplace=False)
  )
  (projection_head): YAwareProjectionHead(
    (layers): Sequential(
      (0): Linear(in_features=32, out_features=64, bias=True)
      (1): ReLU()
      (2): Linear(in_features=64, out_features=32, bias=True)
    )
  )
  (loss): YAwareInfoNCE(
    (sim_metric): PairwiseCosineSimilarity()
  )
)
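The parameter counts reported in the training summary can be checked by hand from the layer shapes printed above: a `Linear(in, out)` layer with bias holds `in * out + out` parameters. A short sketch of that arithmetic (the helper name `linear_params` is hypothetical):

```python
def linear_params(in_features, out_features):
    # Weight matrix plus bias vector of a fully connected layer.
    return in_features * out_features + out_features


encoder_params = linear_params(284, 64) + linear_params(64, 32)
head_params = linear_params(32, 64) + linear_params(64, 32)

print(encoder_params)  # 20320, reported as 20.3 K
print(head_params)  # 4192, reported as 4.2 K
print(encoder_params + head_params)  # 24512, reported as 24.5 K
```

The ReLU and Dropout layers hold no parameters, and the loss is reported with 0 parameters in the summary, so the two linear stacks account for the whole total.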

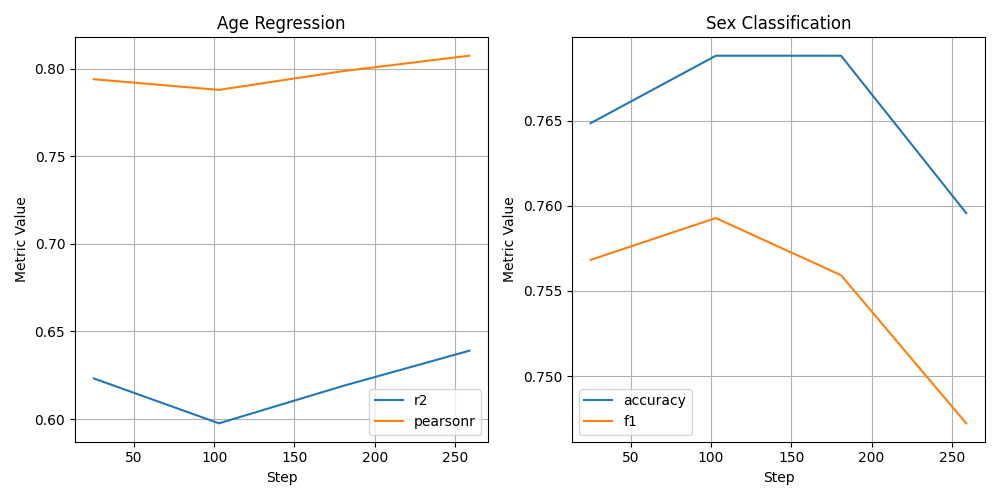
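To get a feel for the bandwidth chosen for the y-Aware model, the sketch below computes Gaussian kernel weights between a few ages with sigma = 4. The formula `exp(-(y_i - y_j)**2 / (2 * sigma**2))` is the standard RBF kernel and is used here as an assumption: the exact kernel and normalization inside nidl's y-Aware loss may differ. The ages are made-up values.

```python
import numpy as np

sigma = 4.0
ages = np.array([20.0, 22.0, 28.0, 60.0])  # made-up ages, in years

# Pairwise Gaussian (RBF) weights: close ages get weights near 1,
# distant ages get weights near 0.
diff = ages[:, None] - ages[None, :]
weights = np.exp(-(diff**2) / (2 * sigma**2))

np.set_printoptions(precision=3, suppress=True)
print(weights)
```

With this bandwidth, two subjects 2 years apart are treated as close to positive pairs, subjects 8 years apart receive a much smaller weight, and subjects decades apart are effectively negatives.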

Visualization of the classification and regression metrics during training

After training, we can visualize the classification and regression metrics logged by the model probing using TensorBoard. The logged metrics are stored in the lightning_logs folder by default.
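The loading step is not shown in this section. A minimal sketch of how a `scalars` dictionary could be built is given below, assuming the logs follow Lightning's default `lightning_logs/version_N` layout. The helper names `latest_version_dir` and `load_scalars` are hypothetical and may differ from any helper defined earlier in the notebook.

```python
import os
import re


def latest_version_dir(root="lightning_logs"):
    """Return the `version_N` sub-folder of `root` with the largest N."""
    versions = []
    for name in os.listdir(root):
        match = re.fullmatch(r"version_(\d+)", name)
        if match and os.path.isdir(os.path.join(root, name)):
            versions.append((int(match.group(1)), name))
    if not versions:
        raise FileNotFoundError(f"no version_* folder found in {root!r}")
    return os.path.join(root, max(versions)[1])


def load_scalars(log_dir):
    """Read every scalar tag of a TensorBoard run into a dict of event lists."""
    # Imported here so that the helper above can be used without TensorBoard.
    from tensorboard.backend.event_processing import event_accumulator

    acc = event_accumulator.EventAccumulator(log_dir)
    acc.Reload()
    return {tag: acc.Scalars(tag) for tag in acc.Tags()["scalars"]}


# Usage, once a training run has written its logs:
# scalars = load_scalars(latest_version_dir())
```

Each entry of the resulting dictionary is a list of scalar events exposing `step` and `value` attributes, which is the structure the plotting code expects.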

Once all the metrics are loaded, we plot them as the number of training steps increases. We create two subplots, one for each task (age regression and sex classification).

def plot_task(ax, task_metrics, title):
    for m in task_metrics:
        steps = [s.step for s in scalars[m]]
        values = [s.value for s in scalars[m]]
        ax.plot(steps, values, label=m.split("/")[1])
    ax.set_title(title)
    ax.set_xlabel("Step")
    ax.set_ylabel("Metric Value")
    ax.legend()
    ax.grid(True)


fig, axes = plt.subplots(1, 2, figsize=(10, 5))
plot_task(axes[0], ["test_age/r2", "test_age/pearsonr"], "Age Regression")
plot_task(axes[1], ["test_sex/accuracy", "test_sex/f1"], "Sex Classification")
plt.tight_layout()
plt.show()

Conclusions

In this notebook, we have shown how to use the model probing tools available in nidl to monitor how the data representation evolves while training embedding models such as SimCLR and y-Aware Contrastive Learning. We have seen how to use ModelProbingCallback for both single-task and multi-task probing. The callback trains standard machine learning models (e.g. logistic regression, ridge regression, SVM) on the learned representation at regular intervals during training and logs the relevant metrics to TensorBoard. This gives insight into which concepts the model is learning and how the representation becomes more suitable for downstream tasks.

Total running time of the script: (7 minutes 32.988 seconds)

Estimated memory usage: 273 MB