Note

This page is reference documentation. It only explains the function signature, not how to use the function. Please refer to the user guide for the big picture.

nidl.metrics.uniformity_score

- nidl.metrics.uniformity_score(z, normalize=True, t=2.0, eps=1e-12)

Compute the uniformity score of an embedding [1].

This metric measures how uniformly the embedding vectors are distributed on the unit hypersphere. Lower values indicate a more uniform distribution.

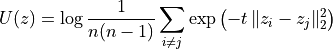

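It is defined as the log of the expected Gaussian potential over all non-identical pairs:

$$
\mathrm{uniformity}(z) = \log \left( \frac{1}{N(N-1)} \sum_{i \neq j} e^{-t \, \lVert z_i - z_j \rVert_2^2} \right)
$$

where $N$ is the number of samples, $z_i$ is the $i$-th embedding vector, and all vectors are first normalized to lie on the unit hypersphere.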

Lower uniformity values indicate a more uniform distribution, which is generally considered better for contrastive representation learning.
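To make the definition concrete, here is a minimal NumPy sketch of the log of the mean Gaussian potential over all pairs with i ≠ j. It is an illustration written from the description on this page, not the nidl source code: the function name `uniformity_sketch` is hypothetical, and the way `eps` is added to the norm before dividing is an assumption.

```python
import numpy as np


def uniformity_sketch(z, normalize=True, t=2.0, eps=1e-12):
    """Illustrative re-implementation of the definition; not the nidl code."""
    z = np.asarray(z, dtype=np.float64)
    if normalize:
        # Assumption: eps guards the division by the L2 norm.
        norms = np.linalg.norm(z, axis=1, keepdims=True)
        z = z / (norms + eps)
    # Squared Euclidean distances between all pairs, shape (n, n).
    sq_norms = np.sum(z**2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * (z @ z.T)
    sq_dists = np.maximum(sq_dists, 0.0)  # clip tiny negative round-off
    # Keep only the non-identical pairs (i != j).
    n = z.shape[0]
    off_diag = ~np.eye(n, dtype=bool)
    return np.log(np.mean(np.exp(-t * sq_dists[off_diag])))


# Two antipodal points: the only squared distance is 4, so the score is -t * 4.
antipodal_score = uniformity_sketch(np.array([[1.0, 0.0], [-1.0, 0.0]]))

# Fully collapsed embedding: every distance is 0, so the score is log(1) = 0.
collapsed_score = uniformity_sketch(np.ones((3, 2)))

print(antipodal_score, collapsed_score)
```

With the default t = 2, the antipodal pair scores about -8 and the collapsed embedding scores 0, which matches the reading that lower values mean a more spread-out embedding.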

- Parameters:

- z: torch.Tensor or np.ndarray, shape (n_samples, n_features)

The embedding vectors.

If a NumPy array is provided, computation is performed in NumPy.

If a torch.Tensor is provided, computation is performed in PyTorch and the returned value is a torch.Tensor scalar.

- normalize: bool, default=True

If True, each vector is L2-normalized before computing the uniformity, as done in contrastive methods that operate on the unit hypersphere (SimCLR, MoCo, etc.).

- t: float, default=2.0

Temperature parameter controlling the sharpness of the Gaussian kernel. t = 2 corresponds to the original definition in [1].

- eps: float, default=1e-12

Small value added to avoid division by zero.
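This page does not spell out where `eps` enters the computation. A common pattern, assumed in the sketch below, is to add it to the L2 norm before dividing, so that an all-zero row does not produce NaN. The helper `l2_normalize` is hypothetical and only illustrates what `normalize=True` and `eps` might do together.

```python
import numpy as np


def l2_normalize(z, eps=1e-12):
    # Hypothetical helper: assumes eps is added to the norm before dividing.
    norms = np.linalg.norm(z, axis=1, keepdims=True)
    return z / (norms + eps)


z = np.array([[3.0, 4.0],   # norm 5, maps to roughly (0.6, 0.8)
              [0.0, 0.0]])  # zero vector, would give 0/0 without eps

unit = l2_normalize(z)

# Normalization removes the overall scale of the non-zero rows.
unit_scaled = l2_normalize(10.0 * z)

print(unit)
```

Under this assumption the zero row stays at the origin instead of becoming NaN, and rescaling the input by a positive constant leaves the normalized vectors (and therefore the score) essentially unchanged.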

- Returns:

- score: torch.Tensor or numpy scalar

The uniformity score.

PyTorch input → returns a 0-dim torch.Tensor

NumPy input → returns a numpy.float64

References

Examples using nidl.metrics.uniformity_score

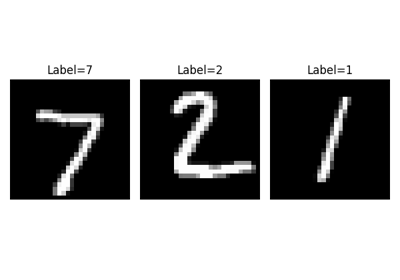

Visualization of metrics during training of PyTorch-Lightning models